A group of researchers led by Jamie Simon argues in a new paper posted to arXiv.org that a formal scientific theory of deep learning is emerging.

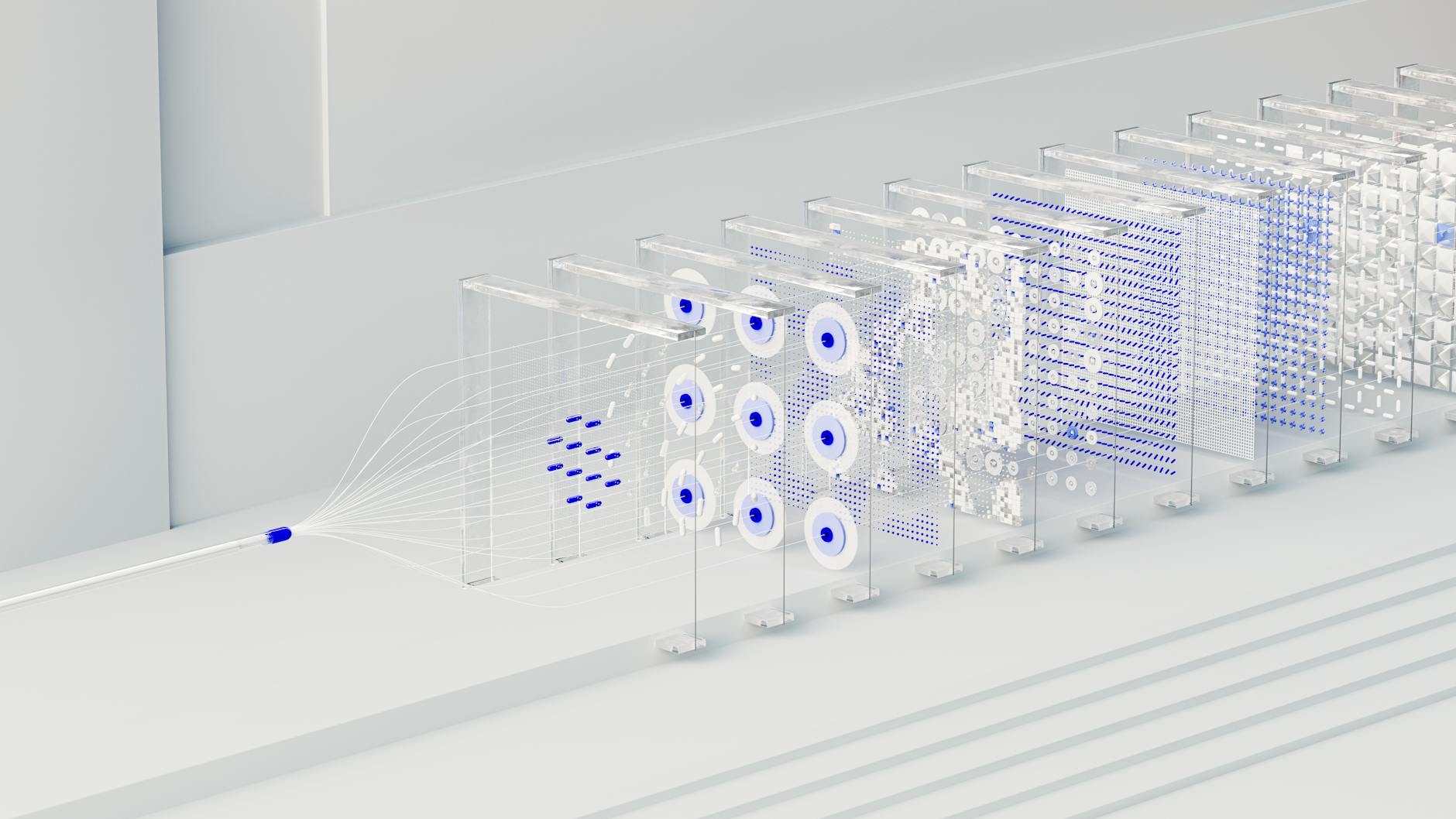

The study, titled "There Will Be a Scientific Theory of Deep Learning," argues that neural networks are not a black box of unpredictable computations and can instead be described by an emerging framework the authors call "learning mechanics."

In the paper, the authors claim this new theory characterizes the essential properties and statistics of training processes, hidden representations, and the final performance of neural networks.

To support this claim, the researchers identify five pillars of ongoing research that point toward a unified theory: solvable idealized settings, tractable limits, simple mathematical laws for macroscopic observables, theories of hyperparameters, and universal behaviors shared across different systems.

A shift toward mechanics

The researchers frame the emerging field as a mechanics of learning, a perspective that focuses on the dynamics of training and on describing aggregate statistics.

"We argue that the emerging theory is best thought of as a mechanics of the learning process," the authors state in the paper's abstract.

The paper also anticipates a symbiotic relationship between the "learning mechanics" perspective and the field of mechanistic interpretability. This connection could help researchers better understand how specific internal components of a model contribute to its overall function.

Beyond proposing a new framework, the authors address common skepticism about whether a fundamental theory of deep learning is possible or even necessary. The 41-page document concludes with a roadmap of future research directions and advice for researchers entering the field.

Additional resources and open questions related to the study are hosted at learningmechanics.pub.