Hugging Face has released a technical blueprint for developers looking to integrate local artificial intelligence into Chrome extensions using the Transformers.js library.

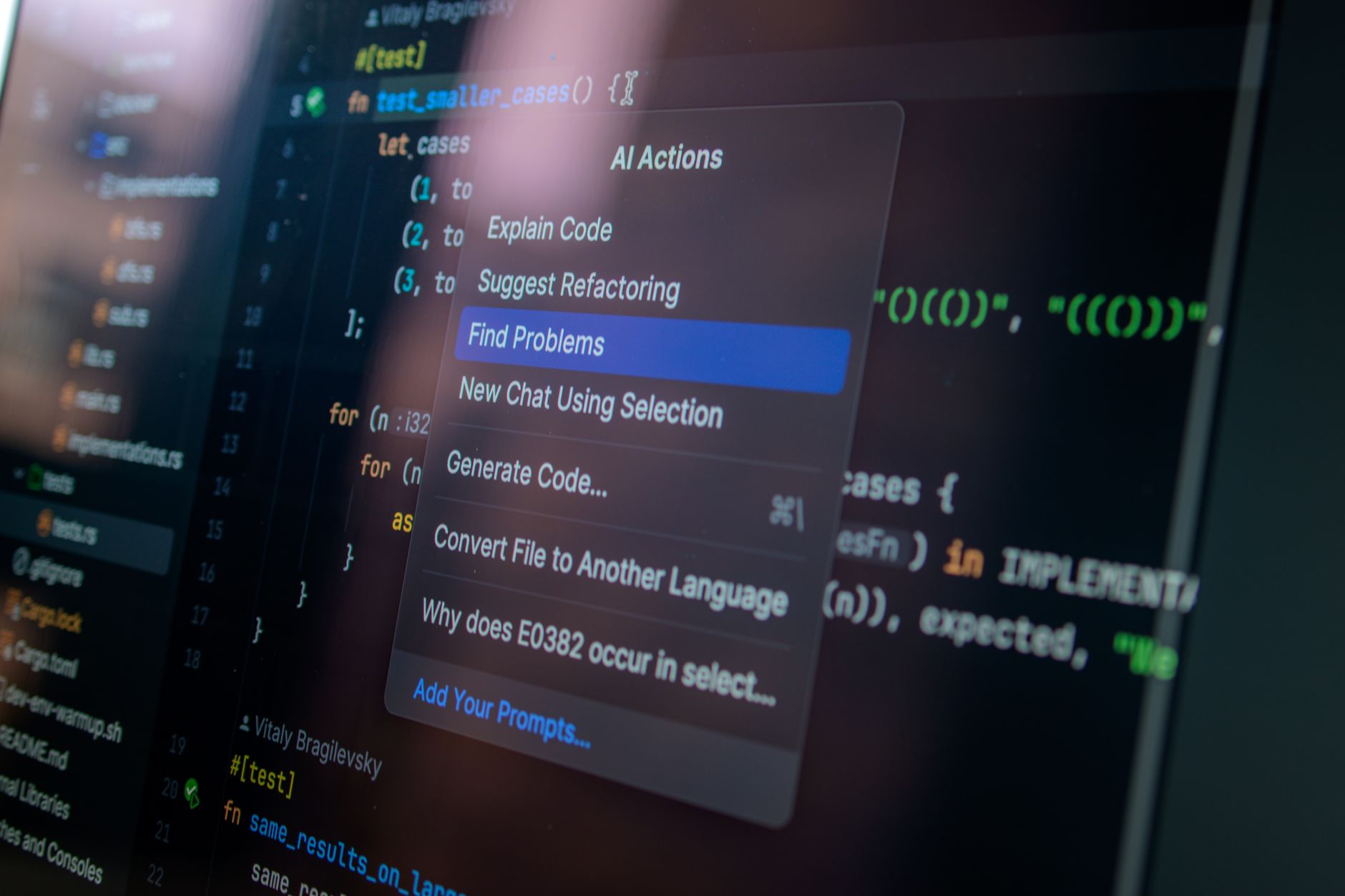

The guide, published by developer Nico Martin, details the architecture behind a recently released browser extension powered by the Gemma 4 E2B model. The project serves as a reference for running AI features locally under the constraints of Chrome's Manifest V3 runtime.

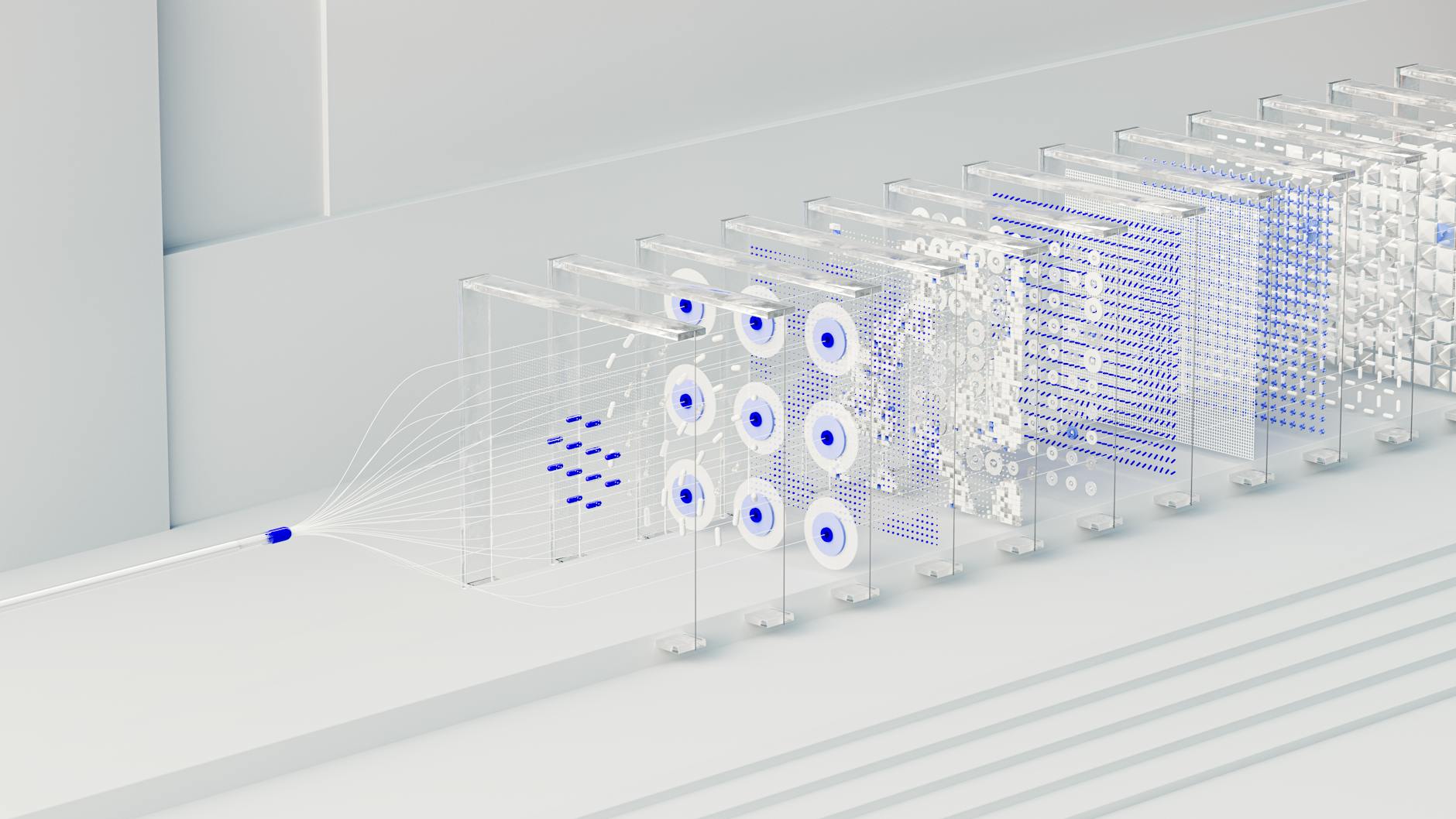

According to the guide, the architecture has three parts: a background service worker that hosts the models, a side panel that provides the chat interface, and a content script that interacts with web pages.
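To make the three parts concrete, the following is a rough sketch of how such an extension's manifest might be laid out, written here as a TypeScript object. The keys are standard Manifest V3 fields, but the extension name and every file name are placeholders chosen for illustration and are not taken from the guide.

```typescript
// Hypothetical Manifest V3 layout for the three-part architecture.
// The name and all file names are placeholders, not taken from the guide.
const manifest = {
  manifest_version: 3,
  name: "Local AI Assistant (example)",
  version: "0.1.0",
  // The sidePanel permission is needed to use Chrome's side panel API.
  permissions: ["sidePanel"],
  // 1. Background service worker: hosts the model and the agent logic.
  background: { service_worker: "background.js", type: "module" },
  // 2. Side panel: the chat interface.
  side_panel: { default_path: "sidepanel.html" },
  // 3. Content script: reads from and highlights the current page.
  content_scripts: [{ matches: ["<all_urls>"], js: ["content.js"] }],
};

// A build step could serialize this object to manifest.json.
const manifestJson: string = JSON.stringify(manifest, null, 2);
```

In a real project the same structure would normally live directly in `manifest.json`; an actual extension would likely need further permissions depending on what its tools do.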

Optimizing for Manifest V3

Implementing AI in a browser environment requires a strict division of labor to maintain performance and security. The guide specifies that the background service worker acts as the 'control plane,' managing the agent lifecycle, model initialization, and tool execution.

This design decision aims to keep the user interface responsive while avoiding the heavy resource cost of duplicate model loads. The side panel serves as the interaction layer for chat input and output, while the content script functions as a bridge for DOM extraction and page highlighting.
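One common way to avoid duplicate model loads is to cache the loading promise so that every caller shares a single load. The sketch below illustrates that idea in isolation; `createLazyLoader` is a hypothetical helper, not part of Transformers.js, and the "model" is a stand-in object. In an actual extension the loader function would presumably wrap the real Transformers.js model initialization.

```typescript
// Generic "load once, share everywhere" helper for a background worker.
// `createLazyLoader` is a hypothetical name, not a Transformers.js API.
function createLazyLoader<T>(load: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (!cached) {
      // Cache the promise itself so concurrent callers share one load.
      cached = load().catch((err) => {
        cached = undefined; // allow a retry after a failed load
        throw err;
      });
    }
    return cached;
  };
}

// Stand-in for an expensive model load (assumption: the real loader
// would initialize a model here instead of returning a dummy object).
let loadCount = 0;
const getModel = createLazyLoader(async () => {
  loadCount += 1;
  return { name: "fake-model" };
});

// Two requests arriving back to back trigger only one load.
const first = getModel();
const second = getModel();
```

Because the promise is cached rather than the resolved value, a second request that arrives while the first load is still in flight waits on the same load instead of starting another.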

'The key design decision is to keep heavy orchestration in the background and keep UI/page logic thin,' Martin wrote in the guide.

Because the three components run in separate contexts, they need a well-defined messaging contract to communicate. In the demonstrated project, the background worker holds the conversation history. When a user interacts with the UI, the side panel sends events such as 'AGENT_GENERATE_TEXT' to the background, which performs the inference and emits updates back to the interface.
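A messaging contract of this kind could be sketched as a set of typed messages plus a background-side session that owns the history. Only the `AGENT_GENERATE_TEXT` event name comes from the article; every other message name, the `AgentSession` class, and the `generate` callback are assumptions made for illustration and may differ from the actual project.

```typescript
type ChatMessage = { role: "user" | "assistant"; content: string };

// Side panel -> background. Only AGENT_GENERATE_TEXT is named in the article.
type PanelToBackground =
  | { type: "AGENT_GENERATE_TEXT"; text: string }
  | { type: "AGENT_RESET" }; // hypothetical

// Background -> side panel. Both names are hypothetical.
type BackgroundToPanel =
  | { type: "AGENT_TEXT_UPDATE"; text: string }
  | { type: "AGENT_DONE"; history: ChatMessage[] };

// Messages arrive untyped, so validate them before use.
function isPanelMessage(value: unknown): value is PanelToBackground {
  if (typeof value !== "object" || value === null) return false;
  const msg = value as { type?: unknown; text?: unknown };
  if (msg.type === "AGENT_RESET") return true;
  return msg.type === "AGENT_GENERATE_TEXT" && typeof msg.text === "string";
}

// Lives in the background worker and owns the conversation history.
class AgentSession {
  private history: ChatMessage[] = [];

  async handle(
    msg: PanelToBackground,
    generate: (history: ChatMessage[]) => Promise<string>, // stand-in for inference
    emit: (event: BackgroundToPanel) => void,
  ): Promise<void> {
    if (msg.type === "AGENT_RESET") {
      this.history = [];
      emit({ type: "AGENT_DONE", history: [] });
      return;
    }
    this.history.push({ role: "user", content: msg.text });
    const reply = await generate([...this.history]);
    this.history.push({ role: "assistant", content: reply });
    emit({ type: "AGENT_TEXT_UPDATE", text: reply });
    emit({ type: "AGENT_DONE", history: [...this.history] });
  }
}

// In a service worker this might be wired up roughly as follows
// (assumption: `runModel` is your own inference function):
//   const session = new AgentSession();
//   chrome.runtime.onMessage.addListener((raw) => {
//     if (isPanelMessage(raw)) {
//       void session.handle(raw, runModel, (e) => chrome.runtime.sendMessage(e));
//     }
//   });
```

Keeping the session logic free of direct `chrome` calls means the contract can be exercised outside the browser, with the Chrome messaging APIs attached only at the edges.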

This architecture specifically addresses the limitations of Manifest V3, such as strict rules regarding service worker lifecycles and memory management. By centralizing the Transformers.js engine in the background script, the extension can maintain persistent AI capabilities even when the side panel is closed.