DeepSeek AI has released a preview of its DeepSeek-V4 series, introducing two large Mixture-of-Experts (MoE) language models that support a context length of one million tokens, according to documentation on Hugging Face.

The lineup features DeepSeek-V4-Pro, a heavyweight model with 1.6 trillion total parameters, of which 49 billion are activated per token, and DeepSeek-V4-Flash, a more streamlined model with 284 billion total parameters and 13 billion activated.
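Those figures imply that only a small share of each model's weights takes part in processing any single token. A quick back-of-the-envelope check, using only the parameter counts quoted above (the helper name `active_fraction` is ours, for illustration):

```python
# Parameter counts as quoted in the article, in billions.
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600.0, "active_b": 49.0},
    "DeepSeek-V4-Flash": {"total_b": 284.0, "active_b": 13.0},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of parameters activated per token (hypothetical helper)."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
```

By this arithmetic, roughly 3.1% of the Pro model's parameters and 4.6% of the Flash model's are active for a given token.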

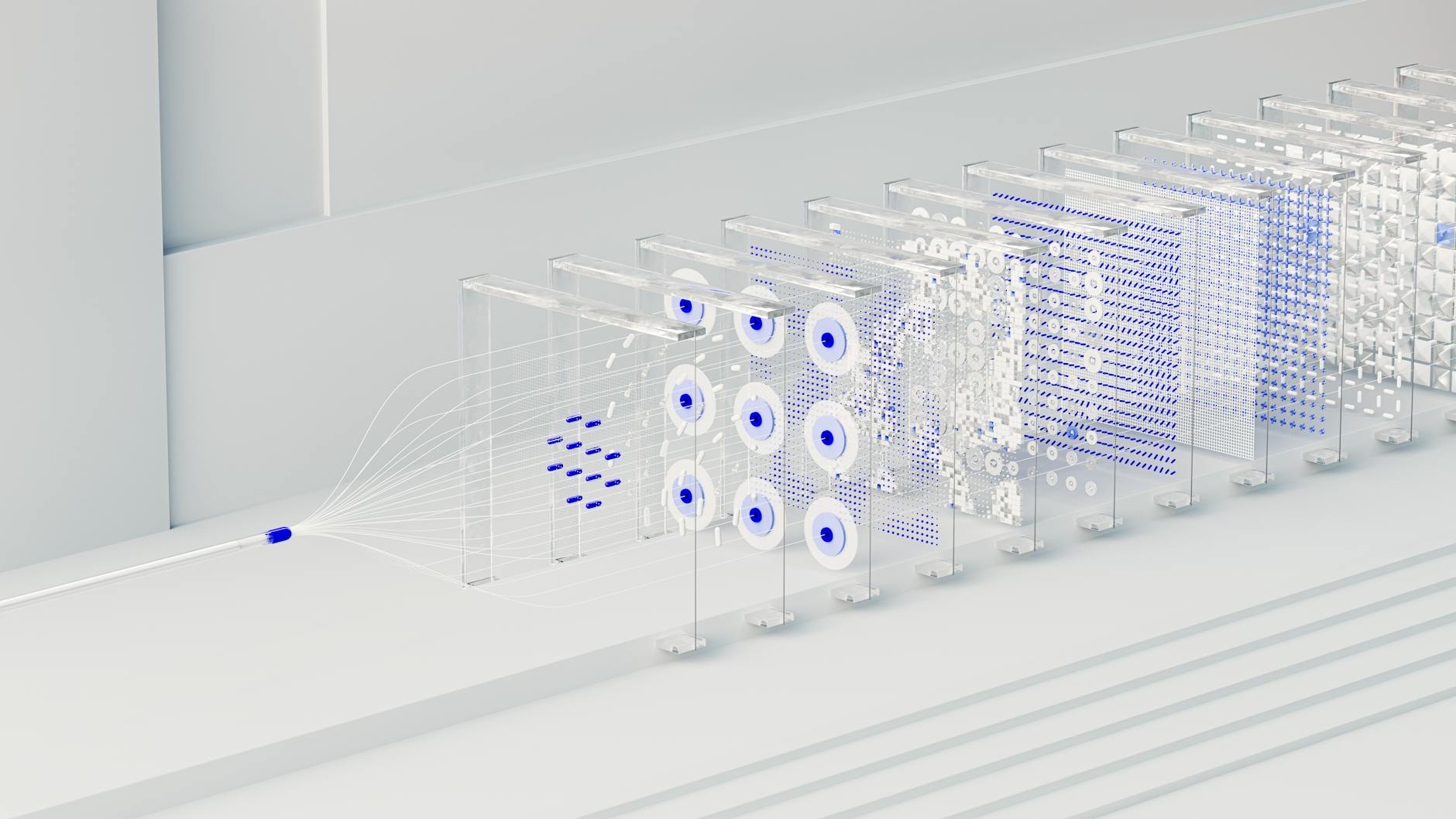

To manage the massive context window, the developers implemented a hybrid attention architecture. This system combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to optimize long-context efficiency.

According to the technical report, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache of its predecessor, DeepSeek-V3.2.
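To put those percentages in more familiar terms, they can be converted into reduction factors. The snippet below is simple arithmetic on the two reported shares; the function name is illustrative and no absolute FLOP or memory figures are assumed.

```python
def reduction_factor(remaining_share: float) -> float:
    """Convert 'needs only X of the baseline' into an 'N times less' factor."""
    if not 0.0 < remaining_share <= 1.0:
        raise ValueError("remaining_share must be in (0, 1]")
    return 1.0 / remaining_share


# Shares reported for DeepSeek-V4-Pro relative to DeepSeek-V3.2.
flops_factor = reduction_factor(0.27)
kv_cache_factor = reduction_factor(0.10)

print(f"Single-token inference FLOPs: about {flops_factor:.1f}x lower")
print(f"KV cache: about {kv_cache_factor:.0f}x smaller")
```

In other words, the reported figures correspond to roughly 3.7 times fewer FLOPs per token and a KV cache about ten times smaller.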

Advanced training and optimization

The developers used the Muon optimizer to achieve faster convergence and greater training stability during pre-training. Both models were trained on more than 32 trillion diverse, high-quality tokens.
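Muon's distinguishing step is to approximately orthogonalize the momentum-averaged gradient of each weight matrix before applying it, usually with a Newton-Schulz iteration. The sketch below illustrates that idea in plain Python. It uses the classic cubic iteration rather than the tuned polynomial found in public Muon implementations, the function names and hyperparameters are hypothetical, and nothing here reflects DeepSeek's actual training code.

```python
import math

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive dense matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def newton_schulz_orthogonalize(g: Matrix, steps: int = 25) -> Matrix:
    """Push all nonzero singular values of g toward 1 (cubic Newton-Schulz)."""
    norm = math.sqrt(sum(v * v for row in g for v in row))
    if norm == 0.0:
        return [row[:] for row in g]
    # Dividing by the Frobenius norm keeps every singular value at or below 1,
    # which the iteration needs in order to converge.
    x = [[v / norm for v in row] for row in g]
    for _ in range(steps):
        xxt_x = matmul(matmul(x, transpose(x)), x)
        x = [[1.5 * x[i][j] - 0.5 * xxt_x[i][j] for j in range(len(x[0]))]
             for i in range(len(x))]
    return x


def muon_like_step(weight: Matrix, grad: Matrix, momentum: Matrix,
                   lr: float = 0.02, beta: float = 0.95):
    """One illustrative update: accumulate momentum, orthogonalize, apply."""
    momentum = [[beta * m + g for m, g in zip(m_row, g_row)]
                for m_row, g_row in zip(momentum, grad)]
    update = newton_schulz_orthogonalize(momentum)
    weight = [[w - lr * u for w, u in zip(w_row, u_row)]
              for w_row, u_row in zip(weight, update)]
    return weight, momentum


zeros = [[0.0, 0.0], [0.0, 0.0]]
new_weight, new_momentum = muon_like_step(zeros, [[1.0, 2.0], [3.0, 4.0]], zeros)
```

Because the update matrix is close to orthogonal, every direction in it has a similar magnitude regardless of how skewed the raw gradient was, which is the intuition usually given for Muon's stability.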

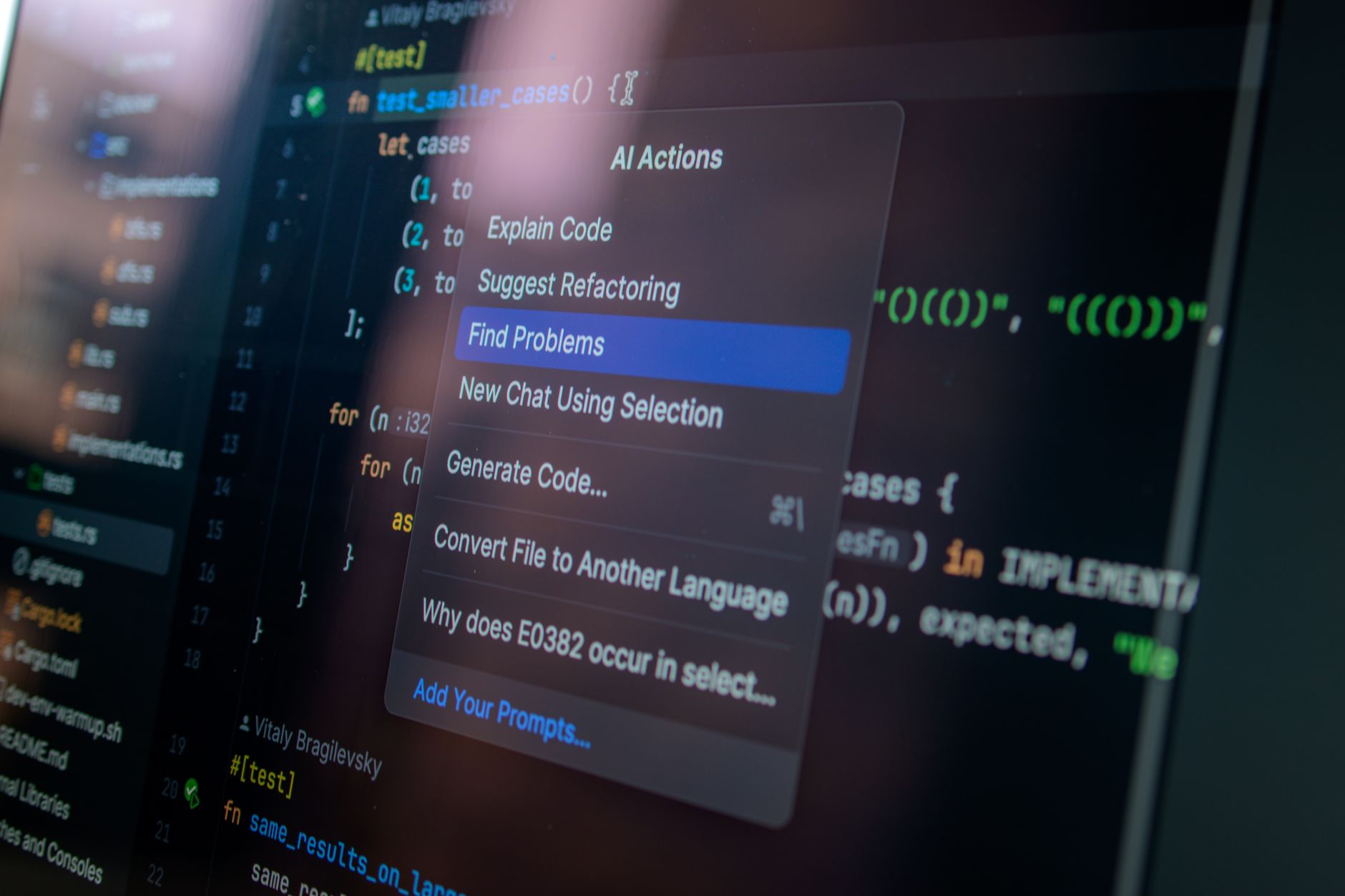

The post-training process follows a two-stage paradigm. DeepSeek first cultivates domain-specific experts through Supervised Fine-Tuning (SFT) and Reinforcement Learning with Group Relative Policy Optimization (GRPO).

Following this, the team uses on-policy distillation to consolidate these distinct proficiencies into a single, unified model. This method allows the models to integrate specialized knowledge across various domains.
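The "group relative" part of GRPO in the first stage refers to scoring each sampled response against the other responses drawn for the same prompt, instead of against a learned value baseline. A minimal sketch of that normalization step follows. The reward values are made up, the clipped policy-ratio objective and KL term are omitted, and this is not DeepSeek's implementation.

```python
import statistics


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward by the mean and spread of its own group.

    Population standard deviation is used here; implementations differ on
    this detail. `eps` avoids division by zero when all rewards are equal.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Hypothetical rewards for four responses sampled for one prompt
# (1.0 = judged correct, 0.0 = judged incorrect).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Responses that beat their group's average receive a positive advantage and are reinforced, while below-average responses are pushed down.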

DeepSeek claims that the DeepSeek-V4-Pro-Max mode, which represents the maximum reasoning effort for the Pro version, establishes itself as the best open-source model available today. The company reported that it significantly advances knowledge capabilities and bridges the performance gap with leading closed-source models in reasoning and agentic tasks.

While the Flash version is smaller, the developers noted that DeepSeek-V4-Flash-Max can achieve reasoning performance comparable to the Pro version when provided with a larger thinking budget. However, the smaller parameter scale of the Flash model naturally places it behind on complex agentic workflows and pure knowledge tasks.

Technical specifications for the release include a mix of FP4 and FP8 precision for the MoE expert parameters. The models are currently available for download via Hugging Face and ModelScope.
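The precision mix matters mainly for storage and memory. Since an FP4 value occupies half a byte and an FP8 value one byte, the raw weight size can be bracketed without knowing the exact split between the two formats. The estimate below is a rough sketch under that assumption only: it ignores quantization scale factors, file metadata, and runtime memory such as the KV cache, and the helper name is ours.

```python
# Storage cost per parameter for each format, in bytes.
BYTES_PER_PARAM = {"fp4": 0.5, "fp8": 1.0}


def weight_bytes(n_params: float, fmt: str) -> float:
    """Raw bytes needed to store n_params values in the given format."""
    return n_params * BYTES_PER_PARAM[fmt]


# Total parameter counts as quoted in the article.
for name, n_params in (("DeepSeek-V4-Pro", 1.6e12), ("DeepSeek-V4-Flash", 284e9)):
    low_gb = weight_bytes(n_params, "fp4") / 1e9   # bound if everything were FP4
    high_gb = weight_bytes(n_params, "fp8") / 1e9  # bound if everything were FP8
    print(f"{name}: between {low_gb:,.0f} GB and {high_gb:,.0f} GB of raw weights")
```

On these bounds, the Pro weights fall somewhere between 800 GB and 1,600 GB and the Flash weights between 142 GB and 284 GB, with the true figure depending on how many parameters use each format.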