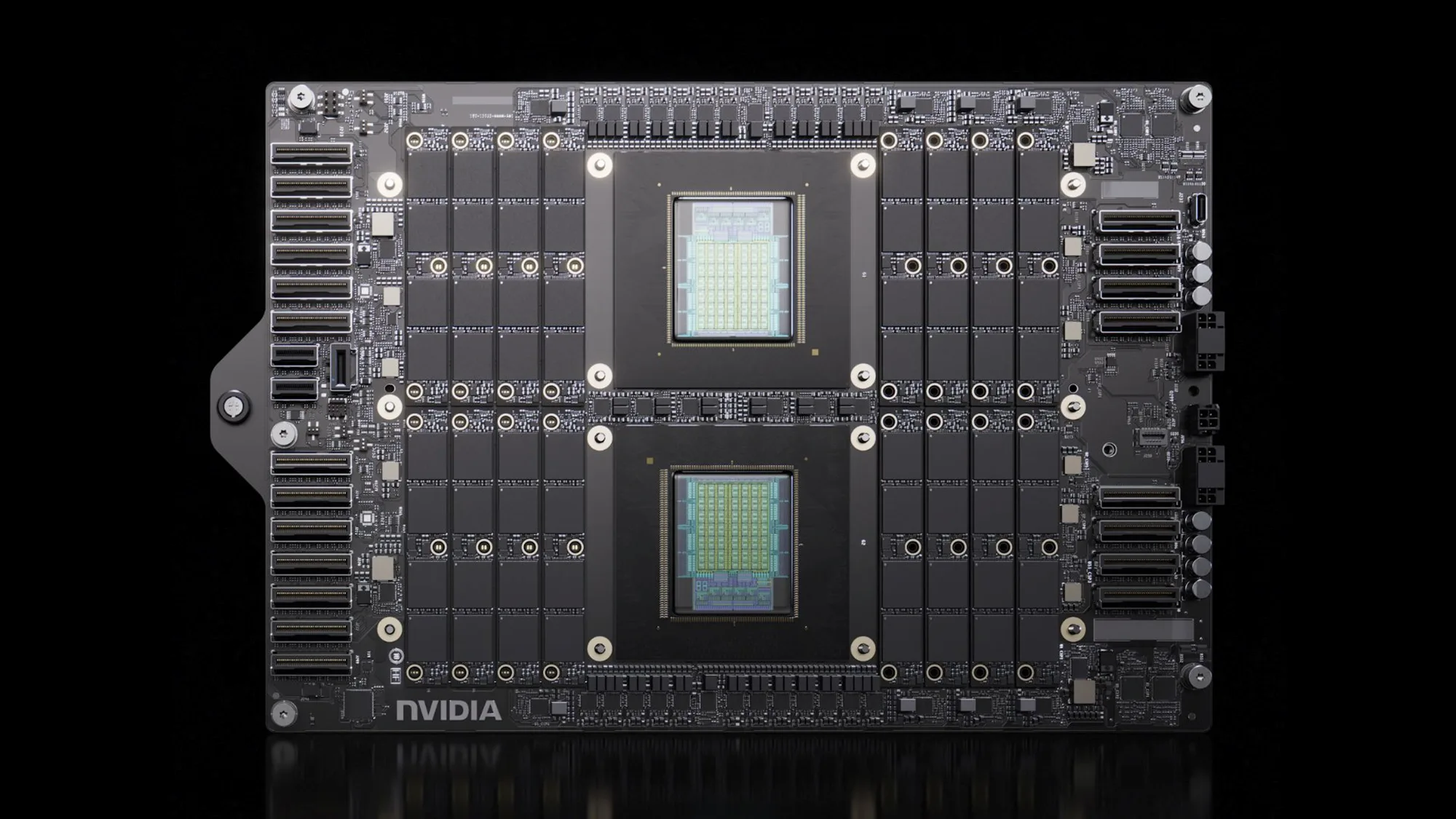

Nvidia is using artificial intelligence to speed up the planning and design of its next-generation graphics processing units (GPUs), according to PC Gamer. The company is building machine learning into its hardware engineering workflows to streamline the development of future chip architectures.

The move highlights Nvidia's strategy of turning its own AI expertise toward the design of its silicon. By applying AI at the design stage, the company aims to cut down the time-intensive work involved in planning a chip's architecture.

Streamlining semiconductor engineering

AI lets Nvidia automate much of the complex simulation work that modern GPUs require. Engineers can use these tools to predict how different transistor configurations will perform under various workloads before any physical prototype exists.

This approach addresses the growing difficulty of designing chips on ever-smaller process nodes. As architectures become more complex, the time and effort required for manual design rise sharply.

PC Gamer reports that the company is leaning heavily on these AI-driven methods to shorten the design cycle. The automation also helps engineers work through the intricate thermal and power-management requirements of next-generation hardware.

AI-assisted planning also helps optimize where components are placed on the die. Precise placement is critical for sustaining high clock speeds and reducing latency in high-performance computing workloads.

Bringing AI into the engineering pipeline reflects a broader trend in the semiconductor industry. Companies are increasingly turning to automated tools across both chip design and manufacturing, including to handle the massive datasets generated during lithography and etching.

Nvidia's focus on AI-assisted design lets engineers test architectural variations more quickly. The goal is to shorten the time between hardware generations.

The design process involves complex decisions about memory bandwidth, cache hierarchy, and instruction sets. With AI, Nvidia can analyze millions of candidate configurations to find the best balance between performance and power consumption.