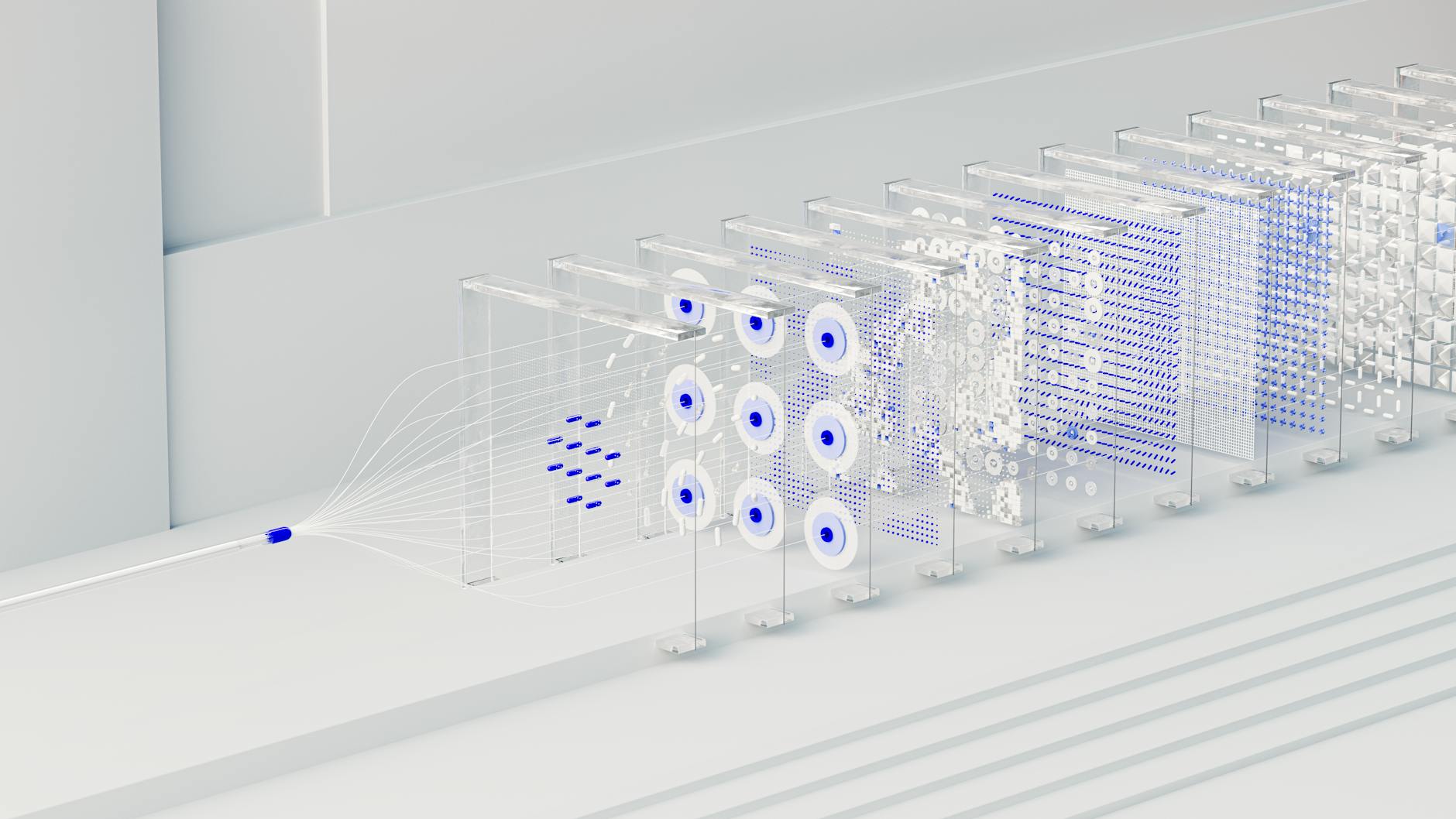

The current industry-wide push for AI alignment is failing to prevent the development of unaligned machine learning models, according to a new report from tech analyst Aphyr.

The report argues that the massive investments made by companies like OpenAI and Google into safety protocols are easily bypassed by any entity willing to skip the expensive process of human feedback and weight adjustment.

"The idea that ML companies will ensure 'AI' is broadly aligned with human interests is naïve," the report states. "Allowing the production of 'friendly' models has necessarily enabled the production of 'evil' ones."

The collapse of safety moats

The author identifies four primary barriers to the spread of unaligned AI that are rapidly eroding. First, the scarcity of high-end training hardware is vanishing as Microsoft, Oracle, and Amazon expand their cloud computing clusters and rent them to any paying customer.

Second, the mathematical foundations of large language models (LLMs) are public knowledge. While proprietary software remains a hurdle, the report suggests that talent migration and state-sponsored espionage, as in past breaches at Twitter, will eventually expose those trade secrets.

Third, the availability of training data is no longer a bottleneck. The report cites Meta’s use of pirated books and the rise of web-scraping-as-a-service companies as evidence that high-quality datasets are easily acquired.

Finally, the high cost of human-led reinforcement learning can be bypassed through model distillation. The report notes that OpenAI has already identified instances where models like DeepSeek may have trained on the outputs of other models to piggyback off existing safety work.
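To make the distillation idea concrete, here is a toy sketch of the general technique, not the report's or any company's actual method. A small "student" model is fitted purely to the outputs of a "teacher" model, with no human labels involved. Every name and parameter here (`TEACHER_W`, `TEACHER_B`, `teacher_predict`, `student_predict`, `distill`) is hypothetical, and the two-input logistic teacher is an assumption chosen only to keep the example short.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical "teacher": stands in for an existing model. The student only
# ever sees its outputs, never these parameters.
TEACHER_W = (2.0, -1.0)
TEACHER_B = 0.5


def teacher_predict(x):
    return sigmoid(TEACHER_W[0] * x[0] + TEACHER_W[1] * x[1] + TEACHER_B)


def student_predict(w, b, x):
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)


def distill(n_queries=200, epochs=2000, lr=1.0, seed=0):
    """Fit a student to teacher outputs using full-batch gradient descent."""
    rng = random.Random(seed)

    # Step 1: query the teacher on unlabeled inputs and record its answers.
    inputs = [(rng.uniform(-2, 2), rng.uniform(-2, 2)) for _ in range(n_queries)]
    soft_labels = [teacher_predict(x) for x in inputs]

    # Step 2: train the student to reproduce those answers by minimizing
    # cross-entropy against the teacher's soft labels.
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, target in zip(inputs, soft_labels):
            err = student_predict(w, b, x) - target
            grad_w[0] += err * x[0]
            grad_w[1] += err * x[1]
            grad_b += err
        w[0] -= lr * grad_w[0] / n_queries
        w[1] -= lr * grad_w[1] / n_queries
        b -= lr * grad_b / n_queries
    return w, b
```

Calling `distill()` returns student parameters whose predictions track the teacher's on inputs it was never queried on. The student reaches that behavior from query access alone, which is the cost shortcut the report describes.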

This lack of control creates a 'unifecta' of risks, where LLMs act as force multipliers for cyberattacks, fraud, and harassment. The report warns that because LLMs are complex, chaotic systems, even the best-funded safety teams cannot yet guarantee that models will not produce harmful or violent content.