Researchers have unveiled I-DLM, a new Introspective Diffusion Language Model that matches the performance of traditional autoregressive models while significantly increasing generation speed.

For years, diffusion language models (DLMs) have struggled to compete with the quality of autoregressive (AR) models. While DLMs promise parallel token generation to bypass the sequential bottleneck of standard decoding, they have consistently lagged in reasoning and coding benchmarks.

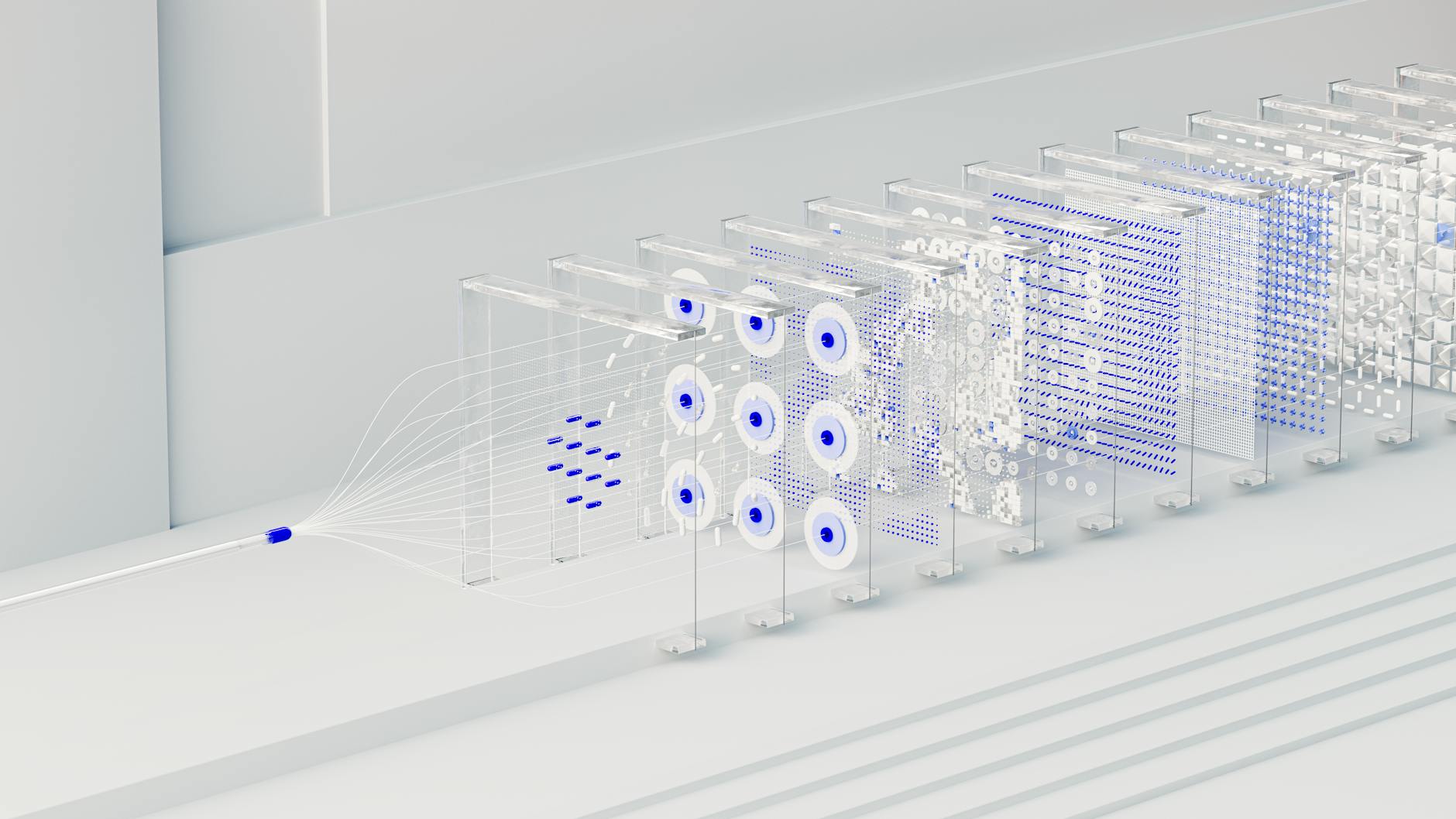

The developers of I-DLM argue this gap is caused by a failure of 'introspective consistency,' where a DLM does not reliably agree with the tokens it has itself generated. To solve this, the team introduced Introspective Strided Decoding (ISD), a method that verifies previously generated tokens while simultaneously advancing new ones in a single forward pass.
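The verify-while-advancing idea can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not the researchers' implementation: `ar_next` and `draft_next` are hypothetical stand-ins for the model's verified and draft predictions, `STRIDE` is an invented parameter, and the loop inside `forward_pass` simulates sequentially what a real model would compute in parallel in one pass.

```python
from typing import List, Tuple

VOCAB = 50   # toy vocabulary size (assumption)
STRIDE = 4   # hypothetical number of draft tokens proposed per pass


def ar_next(prefix: List[int]) -> int:
    """Toy stand-in for the token the model would confirm after `prefix`."""
    return (sum(prefix) * 31 + len(prefix) * 7 + 3) % VOCAB


def draft_next(prefix: List[int]) -> int:
    """Toy stand-in for a parallel draft guess; deliberately wrong sometimes."""
    tok = ar_next(prefix)
    return (tok + 1) % VOCAB if len(prefix) % 5 == 0 else tok


def forward_pass(committed: List[int], drafts: List[int]) -> Tuple[List[int], List[int]]:
    """One simulated pass: verify earlier drafts, then propose new ones."""
    seq = list(committed)
    # Verify: keep the longest run of drafts the model agrees with.
    for tok in drafts:
        if ar_next(seq) != tok:
            break
        seq.append(tok)
    # Always commit one verified token so every pass makes progress.
    seq.append(ar_next(seq))
    # Advance: propose the next STRIDE draft tokens for the following pass.
    ctx, new_drafts = list(seq), []
    for _ in range(STRIDE):
        tok = draft_next(ctx)
        new_drafts.append(tok)
        ctx.append(tok)
    return seq, new_drafts


def ar_decode(prompt: List[int], n: int) -> List[int]:
    """Baseline: one token per pass."""
    seq = list(prompt)
    for _ in range(n):
        seq.append(ar_next(seq))
    return seq


def isd_decode(prompt: List[int], n: int) -> Tuple[List[int], int]:
    """Strided decoding: returns the sequence and the number of passes used."""
    seq, drafts, passes = list(prompt), [], 0
    target = len(prompt) + n
    while len(seq) < target:
        seq, drafts = forward_pass(seq, drafts)
        passes += 1
    return seq[:target], passes
```

In this toy setup, every committed token is one the verifier would have produced anyway, so `isd_decode` returns the same sequence as `ar_decode` while using fewer passes whenever drafts are accepted.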

Efficiency and performance

In benchmark tests, the I-DLM-8B model became the first diffusion-based model to match the quality of its same-scale autoregressive counterparts. On the AIME-24 math benchmark, the 8B model scored 69.6, significantly outperforming the 16B LLaDA-2.1-mini, which scored 43.3.

Coding capabilities also improved sharply. The I-DLM-8B model outperformed LLaDA-2.1-mini by 15 points on LiveCodeBench-v6. Despite having half the parameters of the 16B LLaDA-2.1-mini, I-DLM-8B maintains high accuracy across 15 different benchmarks, including MMLU and GSM8K.

Beyond benchmark accuracy, the architecture offers a substantial advantage in throughput. Under high concurrency, I-DLM delivers 2.9x to 4.1x the throughput of standard autoregressive models. The researchers noted that while previous diffusion models like SDAR hit a performance plateau due to compute inefficiency, I-DLM's efficiency actually increases relative to AR models as concurrency rises.

The system is designed for easy integration, utilizing causal attention to allow direct deployment within existing frameworks like SGLang. The researchers also implemented 'Gated LoRA,' which enables bit-for-bit lossless acceleration, ensuring the model's output remains identical to high-quality autoregressive outputs.
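The article does not describe how Gated LoRA works internally. One plausible reading, offered here only as an assumption, is that a low-rank adapter is switched on or off by a gate, and that the adapter path is skipped entirely when the gate is closed, so the layer computes exactly what the base weights alone would compute. The sketch below illustrates that reading in plain Python; `gated_lora_forward`, the gate semantics, and the example matrices are all hypothetical.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def gated_lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, gate: bool) -> Vector:
    """Base layer W plus a low-rank update B @ A, applied only when gate is True.

    Assumption: with the gate closed the adapter path is not computed at all,
    so the result is exactly the base layer's output.
    """
    base = matvec(W, x)
    if not gate:
        return base
    delta = matvec(B, matvec(A, x))  # low-rank correction B(Ax)
    return [b + d for b, d in zip(base, delta)]


# Hypothetical example values: a 2x2 base layer with a rank-1 adapter.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[0.5, -0.5]]        # rank x in_features
B = [[1.0], [2.0]]       # out_features x rank
x = [1.0, 2.0]

closed = gated_lora_forward(W, A, B, x, gate=False)  # same as matvec(W, x)
opened = gated_lora_forward(W, A, B, x, gate=True)   # base plus adapter update
```

Skipping the adapter branch, rather than multiplying its output by zero, is a deliberate choice in this sketch: it avoids any floating-point side effects, which is what a bit-for-bit guarantee would require.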