On April 7, Z.ai officially launched GLM-5.1, its latest flagship model. Designed for agentic engineering, the model improves substantially on the coding capabilities of its predecessor, GLM-5, and sets new records on several benchmarks, including SWE-Bench Pro.

GLM-5.1’s performance in software engineering is particularly striking. According to data released by Z.ai, the model scored 58.4 on SWE-Bench Pro, surpassing the 55.1 scored by its predecessor, GLM-5, and also outperforming GPT-5.4 and Gemini 3.1 Pro. Unlike earlier models, which often plateau early in a task, GLM-5.1 keeps making progress on long-horizon objectives.

Solving the Long-Horizon Optimization Challenge

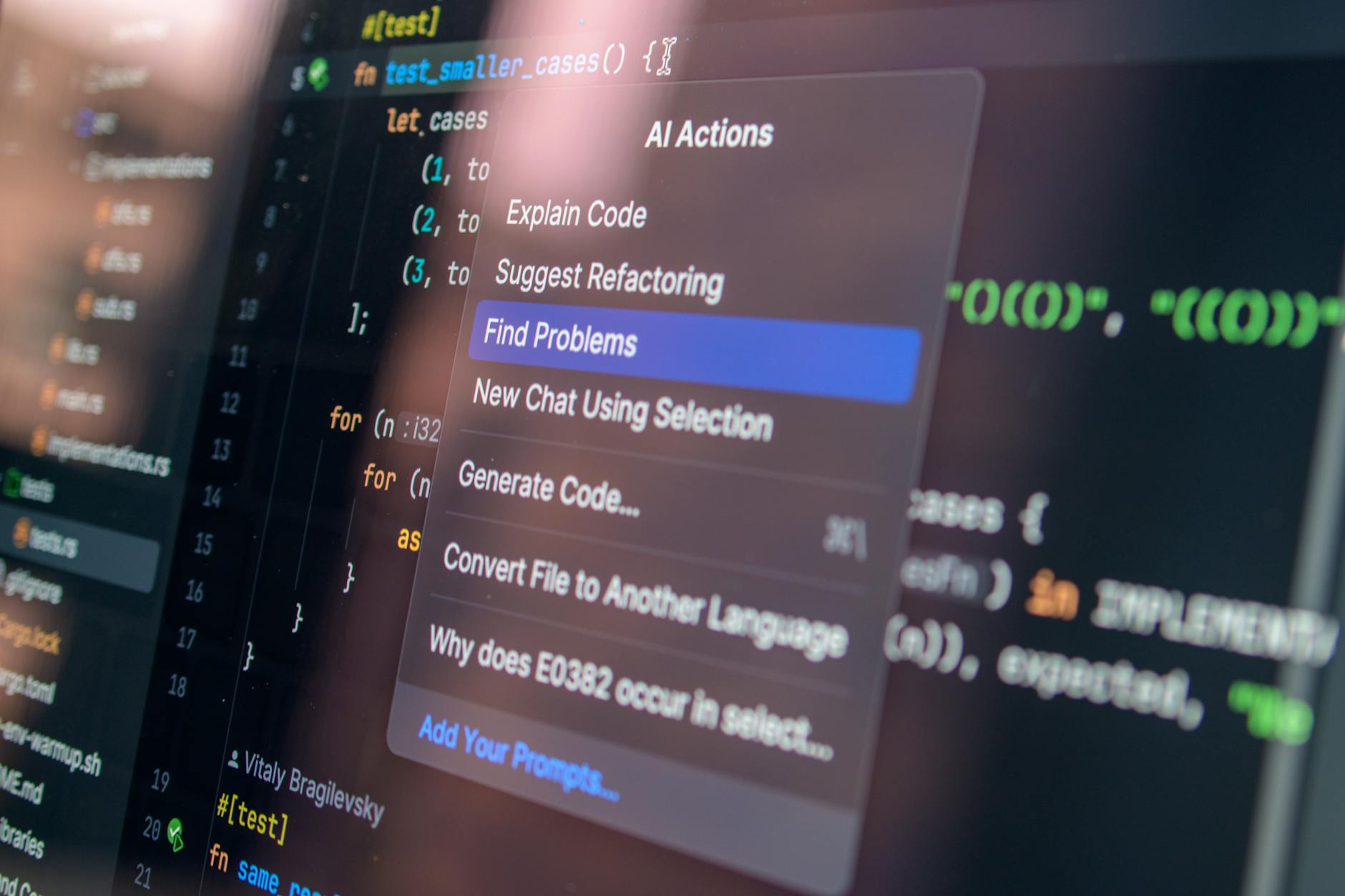

Z.ai’s R&D team noted that traditional models often struggle with complex coding tasks: once they have applied their initial, familiar techniques, they stop improving. GLM-5.1, by contrast, uses iterative reasoning and strategic refinement to keep optimizing results over hundreds of rounds and thousands of tool calls.

In vector database optimization experiments, researchers observed GLM-5.1 continuing to improve over more than 600 iterations. The model did more than write and test code; it autonomously identified performance bottlenecks and made structural changes, including replacing full-table scans with IVF (inverted file) clustering and introducing a two-stage pipeline. Ultimately, the model pushed throughput to 21.5k queries per second (QPS), six times the best result achievable under a 50-round limit.
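For readers unfamiliar with the technique, the difference between a full scan and an IVF index can be illustrated with a minimal Python sketch. This is not GLM-5.1's code or Z.ai's benchmark harness, neither of which is described in the announcement. All function names here are hypothetical, and the "two-stage" structure shown (pick the nearest cells first, then compute exact distances only inside them) is an assumption about what such a pipeline might look like.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def full_scan(vectors, query, k):
    """Baseline: score every stored vector against the query."""
    ids = sorted(range(len(vectors)), key=lambda i: sq_dist(vectors[i], query))
    return ids[:k]


def assign(vectors, centroids):
    """Place each vector id in the cell of its nearest centroid."""
    cells = [[] for _ in centroids]
    for i, v in enumerate(vectors):
        nearest = min(range(len(centroids)), key=lambda c: sq_dist(v, centroids[c]))
        cells[nearest].append(i)
    return cells


def build_ivf(vectors, n_cells, n_iters=5, seed=0):
    """Build a toy IVF index with a few rounds of k-means (illustrative only)."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, n_cells)]
    dim = len(vectors[0])
    for _ in range(n_iters):
        cells = assign(vectors, centroids)
        for c, ids in enumerate(cells):
            if ids:  # keep the old centroid if a cell ends up empty
                centroids[c] = [sum(vectors[i][d] for i in ids) / len(ids)
                                for d in range(dim)]
    # Final assignment so the cells match the returned centroids.
    return centroids, assign(vectors, centroids)


def ivf_search(vectors, centroids, cells, query, k, n_probe):
    """Assumed two-stage lookup: coarse cell selection, then exact ranking."""
    # Stage 1: keep only the n_probe cells whose centroids are closest.
    probe = sorted(range(len(centroids)),
                   key=lambda c: sq_dist(centroids[c], query))[:n_probe]
    # Stage 2: exact distances, but only for vectors in the probed cells.
    candidates = [i for c in probe for i in cells[c]]
    candidates.sort(key=lambda i: sq_dist(vectors[i], query))
    return candidates[:k]
```

With `n_probe` equal to the number of cells, `ivf_search` examines every vector and matches `full_scan`. Lowering `n_probe` skips most of the data, trading some recall for speed, which is the general reason an IVF index can raise query throughput.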

Beyond software engineering, GLM-5.1 displayed similar self-improving behavior on KernelBench, a machine learning-focused benchmark. By autonomously analyzing benchmark logs, the model identified and resolved system-level bottlenecks, achieving performance gains that exceeded those of traditional compiler optimization tools.

GLM-5.1 is now available on GitHub and HuggingFace. Z.ai stated that the model’s effectiveness shows a clear upward trend as task duration increases, marking a critical step forward for artificial intelligence in complex, long-cycle engineering applications.