Researchers have introduced TriAttention, a KV cache compression technique that targets the memory bottleneck large language models (LLMs) face during long-context inference. It exploits the stability of Query (Q) and Key (K) vectors in the pre-RoPE space, that is, before Rotary Positional Embedding is applied, to cut memory use and raise throughput.

During long-text generation, the KV cache can quickly exhaust GPU memory and cause out-of-memory failures. Existing compression methods typically rely on attention scores computed in the post-RoPE space; however, because rotary positional encoding rotates queries and keys by a position-dependent angle, these approaches often struggle to identify which cached entries are critical, and inference quality becomes unstable.
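To make the memory pressure concrete, here is a minimal back-of-the-envelope sketch of how KV cache size grows with context length. The function name `kv_cache_bytes` and the model configuration (64 layers, 8 KV heads, head dimension 128, 16-bit values) are hypothetical values chosen for illustration; they are not taken from the TriAttention paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_elem=2):
    """Estimate KV cache size in bytes for a standard transformer decoder.

    The leading factor of 2 accounts for storing one key tensor and one
    value tensor per layer. bytes_per_elem=2 assumes fp16/bf16 storage.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)


# Hypothetical configuration, for illustration only.
per_token = kv_cache_bytes(64, 8, 128, seq_len=1)
full_context = kv_cache_bytes(64, 8, 128, seq_len=32_768)

print(f"per token:    {per_token / 1024:.0f} KiB")
print(f"32,768 tokens: {full_context / 1024**3:.0f} GiB")
```

Under these assumed settings the cache costs 256 KiB per token and 8 GiB at 32,768 tokens, on top of the model weights, which is why long generations can run out of memory on a single consumer GPU.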

The Stability Advantage of the Pre-Rotation Space

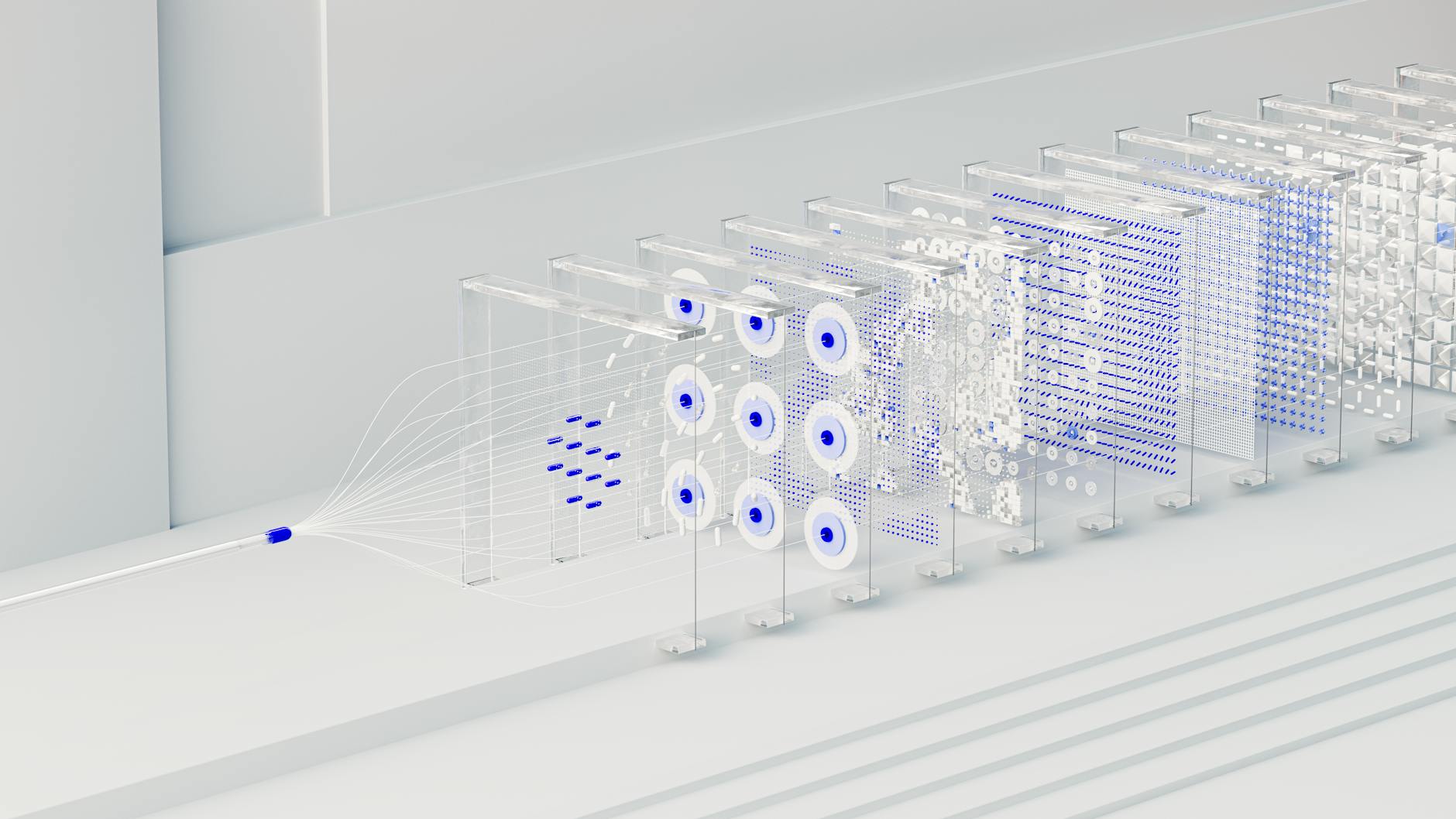

The research team found that in the pre-rotation (pre-RoPE) space, Q and K vectors cluster around a fixed, non-zero center. This "Q/K concentration" phenomenon remains highly consistent across input contexts and positions, which allows attention patterns to be predicted with a trigonometric series. TriAttention builds on this finding: it computes distance preferences from the trigonometric series and combines them with vector norms as an auxiliary signal to estimate the importance of each cached key-value entry.
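The link between a fixed Q/K center and a trigonometric series follows from how RoPE works: if the pre-rotation query and key are held fixed, the post-rotation attention logit depends only on the relative distance between them and takes the form of a sum of cosines and sines. The NumPy sketch below checks this identity numerically. It is not the paper's scoring rule; the function names, the interleaved pairing convention, and the base of 10000 are assumptions made for illustration.

```python
import numpy as np


def rope_frequencies(dim, base=10000.0):
    """Per-pair rotation frequencies of standard RoPE (dim must be even)."""
    return base ** (-np.arange(0, dim, 2) / dim)


def rope(x, pos, thetas):
    """Rotate x as if it sat at position pos, treating (x[2i], x[2i+1]) as a pair."""
    xc = x[0::2] + 1j * x[1::2]            # view each pair as a complex number
    rotated = xc * np.exp(1j * pos * thetas)
    out = np.empty_like(x)
    out[0::2] = rotated.real
    out[1::2] = rotated.imag
    return out


def trig_series_logit(q, k, delta, thetas):
    """Logit between pre-RoPE q and k at relative distance delta (query pos - key pos).

    Equals sum_i a_i * cos(delta * theta_i) + b_i * sin(delta * theta_i),
    where a_i and b_i depend only on the pre-rotation vectors.
    """
    coeff = (q[0::2] + 1j * q[1::2]) * np.conj(k[0::2] + 1j * k[1::2])
    a, b = coeff.real, -coeff.imag
    return np.sum(a * np.cos(delta * thetas) + b * np.sin(delta * thetas))


# Stand-ins for a head's Q and K centers (random here, purely illustrative).
rng = np.random.default_rng(0)
dim = 64
thetas = rope_frequencies(dim)
q_center = rng.normal(size=dim)
k_center = rng.normal(size=dim)

direct = np.dot(rope(q_center, 1000, thetas), rope(k_center, 900, thetas))
series = trig_series_logit(q_center, k_center, 100, thetas)
print(direct, series)  # the two values agree up to floating-point error
```

Because the coefficients depend only on the pre-rotation vectors, a head whose Q and K stay close to fixed centers has a largely predictable preference over distances, which is the property the article describes TriAttention as exploiting.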

The reported results support the approach. On the AIME25 dataset, TriAttention achieved a 2.5x increase in throughput and a 10.7x reduction in memory usage while matching the accuracy of full attention. By contrast, existing baseline compression methods reach only about half the accuracy of full attention at similar efficiency levels.

The technique also helps in practical deployment. When running a 32B-parameter model on a GPU with 24GB of VRAM, full attention failed with out-of-memory (OOM) errors during long-instruction tasks, whereas TriAttention completed the tasks in their entirety. On the MATH 500 benchmark, it reached an inference speed of 1,405 tokens per second, roughly 6.3x the 223 tokens per second achieved by full attention.

The research was conducted by Mao et al., and the corresponding paper has been published as a preprint. Through an adaptive weighting mechanism, TriAttention automatically adjusts the weights of its scoring signals according to how concentrated each attention head is, lowering the computational cost of long-text processing in large models without compromising performance.