Developers recently released two open-source projects on GitHub—Parlor and Gemma Gem—marking a significant step forward in the adoption of lightweight, on-device AI. These projects leverage Google’s latest Gemma 4 model, allowing users to run multimodal AI conversations locally on their machines without requiring an internet connection or external API calls.

Maintained by developer fikrikarim, the Parlor project aims to provide real-time, natural voice and visual interaction by combining the Gemma 4 E2B model with the Kokoro speech library. The project emphasizes that everything runs on local hardware, so user data never leaves the device during processing. According to GitHub commit logs, the project has pinned Python to version 3.12 to avoid compatibility issues with newer releases.

Browser Extensions for Web Automation

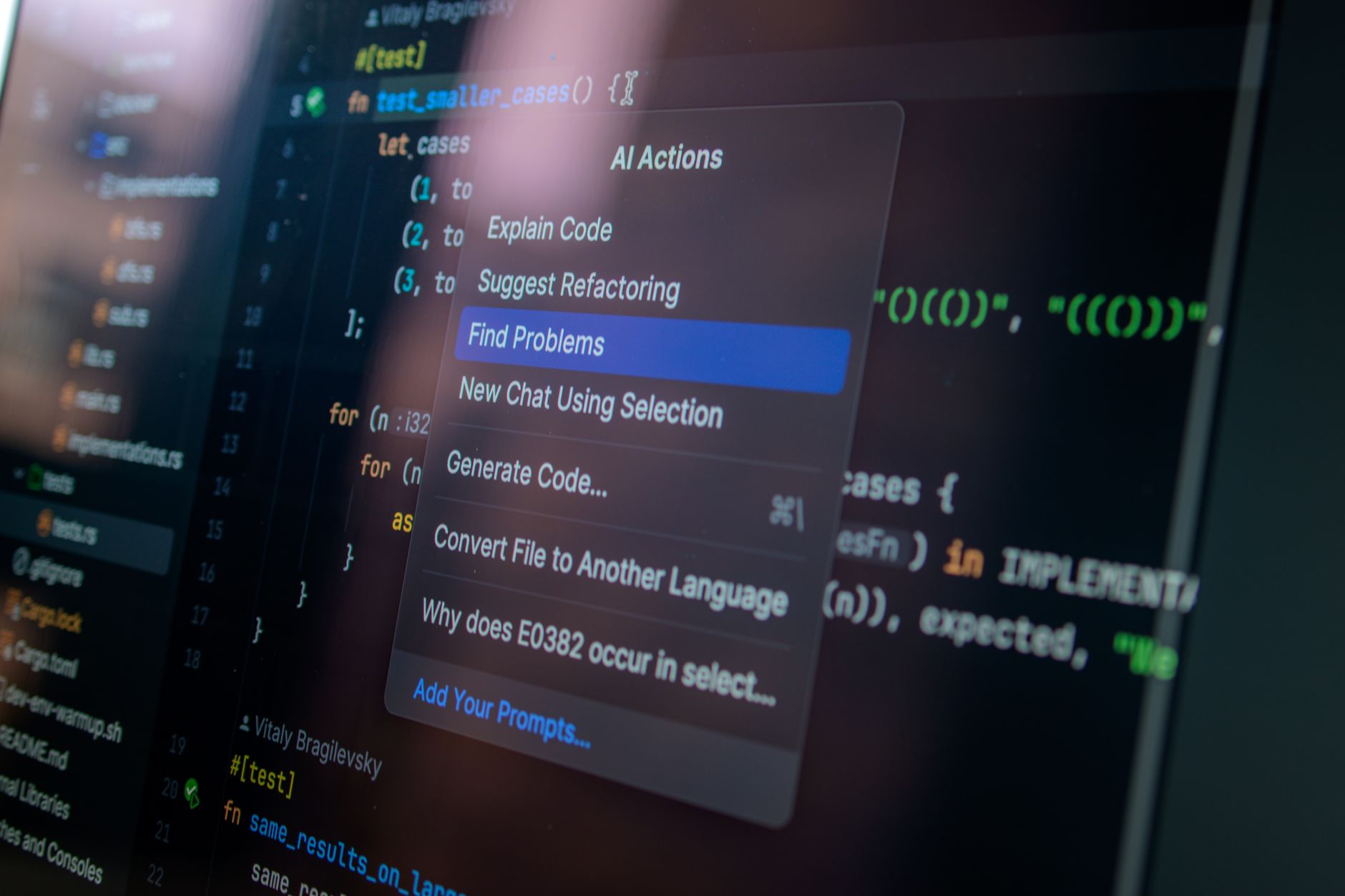

Meanwhile, the Gemma Gem project, launched by developer kessler, brings the Gemma 4 model directly into the browser via a Chrome extension. The tool uses WebGPU to perform local inference, so users can interact with the model without an API key. It supports both the Gemma 4 E2B (approx. 500 MB) and E4B (approx. 1.5 GB) versions, which users can switch between in the settings based on their needs.
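To make the WebGPU requirement and the model toggle concrete, here is a minimal TypeScript sketch of how an extension might check for WebGPU and resolve the selected variant. It is not taken from Gemma Gem's source: the function names, the settings value, and the fallback to E2B are all assumptions made for illustration. The only real browser API involved is `navigator.gpu.requestAdapter()`, which resolves to `null` when no suitable adapter is available.

```typescript
// Hypothetical sketch: names and defaults are illustrative, not Gemma Gem's code.

type Variant = "E2B" | "E4B";

// Approximate download sizes in MB, as reported in this article.
const APPROX_SIZE_MB: Record<Variant, number> = { E2B: 500, E4B: 1500 };

// Minimal structural view of the WebGPU entry point (navigator.gpu).
interface GpuLike {
  requestAdapter(): Promise<unknown>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

// Synchronous check: does the browser expose the WebGPU API at all?
function hasWebGpuApi(nav: NavigatorLike): boolean {
  return nav.gpu !== undefined;
}

// Asynchronous check: an API may exist while no usable adapter does,
// in which case requestAdapter() resolves to null.
async function canRunLocally(nav: NavigatorLike): Promise<boolean> {
  const gpu = nav.gpu;
  if (gpu === undefined) return false;
  const adapter = await gpu.requestAdapter();
  return adapter !== null;
}

// Resolve the stored setting; falling back to the smaller model is an assumption.
function resolveVariant(setting: string | undefined): Variant {
  return setting === "E4B" ? "E4B" : "E2B";
}
```

In a real extension page, the `navigator` global would be passed in place of the `NavigatorLike` stand-in, and the resolved variant would decide which model weights to download.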

Gemma Gem is more than a chat assistant; it can also carry out tasks on web pages. Through an integrated agent loop, the extension can read page content, click buttons, fill out forms, scroll through pages, and execute JavaScript code. Its architecture has three core components (an offscreen document, a service worker, and content scripts), and model inference runs inside the browser through the @huggingface/transformers library.
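The agent loop described above can be sketched in a few lines of TypeScript. This is a simplified illustration under stated assumptions, not Gemma Gem's implementation: the model is replaced by a scripted function, the page is an in-memory object rather than a real DOM, and every name (`runAgent`, `read_page`, `fill_form`, `click`) is hypothetical. The idea it shows is general: the model either requests a tool or gives a final answer, and each tool result is appended to the transcript before the model is asked again.

```typescript
// Hypothetical sketch of an agent loop; no names here come from Gemma Gem's source.

type ToolCall = { tool: string; args: Record<string, string> };
type ModelTurn =
  | { kind: "tool"; call: ToolCall }
  | { kind: "final"; text: string };

// A tool receives string arguments and returns a textual observation.
type Tool = (args: Record<string, string>) => string;

// The model is abstracted as a function from the transcript to its next turn.
type Model = (transcript: string[]) => ModelTurn;

function runAgent(
  model: Model,
  tools: Record<string, Tool>,
  task: string,
  maxSteps = 8,
): string {
  const transcript: string[] = [`user: ${task}`];
  for (let step = 0; step < maxSteps; step++) {
    const turn = model(transcript);
    if (turn.kind === "final") return turn.text;
    const tool = tools[turn.call.tool];
    const observation = tool
      ? tool(turn.call.args)
      : `error: unknown tool ${turn.call.tool}`;
    // Feed the result back so the next model turn can react to it.
    transcript.push(`tool ${turn.call.tool}: ${observation}`);
  }
  // Cap the loop so a confused model cannot run forever.
  return "stopped: step limit reached";
}

// In-memory stand-in for a web page; a real extension would touch the DOM.
const page = {
  text: "Sign up for updates",
  fields: {} as Record<string, string>,
  clicked: [] as string[],
};

const tools: Record<string, Tool> = {
  read_page: () => page.text,
  fill_form: (args) => {
    page.fields[args.selector ?? ""] = args.value ?? "";
    return "ok";
  },
  click: (args) => {
    page.clicked.push(args.selector ?? "");
    return "ok";
  },
};

// Scripted stand-in for the language model: read, fill, click, then finish.
const scripted: Model = (transcript) => {
  const toolTurns = transcript.length - 1;
  if (toolTurns === 0) return { kind: "tool", call: { tool: "read_page", args: {} } };
  if (toolTurns === 1) {
    return {
      kind: "tool",
      call: { tool: "fill_form", args: { selector: "#email", value: "a@example.com" } },
    };
  }
  if (toolTurns === 2) {
    return { kind: "tool", call: { tool: "click", args: { selector: "#submit" } } };
  }
  return { kind: "final", text: "Form submitted." };
};

const result = runAgent(scripted, tools, "Subscribe me to updates");
```

The step limit is a design choice worth keeping in any variant of this loop, since a small local model may keep requesting tools without ever producing a final answer.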

Users can load the extension at chrome://extensions after enabling developer mode. The tool also lets users disable it on specific sites and clear the conversation context to reset their history. Although the two projects take different approaches, both reflect a growing trend toward running advanced AI models locally on personal computers to improve privacy and response times.