Researchers affiliated with Anthropic and EPFL published findings in February 2026 on how the failure modes of advanced AI systems scale with model intelligence and task difficulty. The study asks whether failures look like the systematic pursuit of misaligned goals, as in the classic paperclip-maximizer scenario, or like incoherent, unpredictable behavior resembling a 'hot mess.'

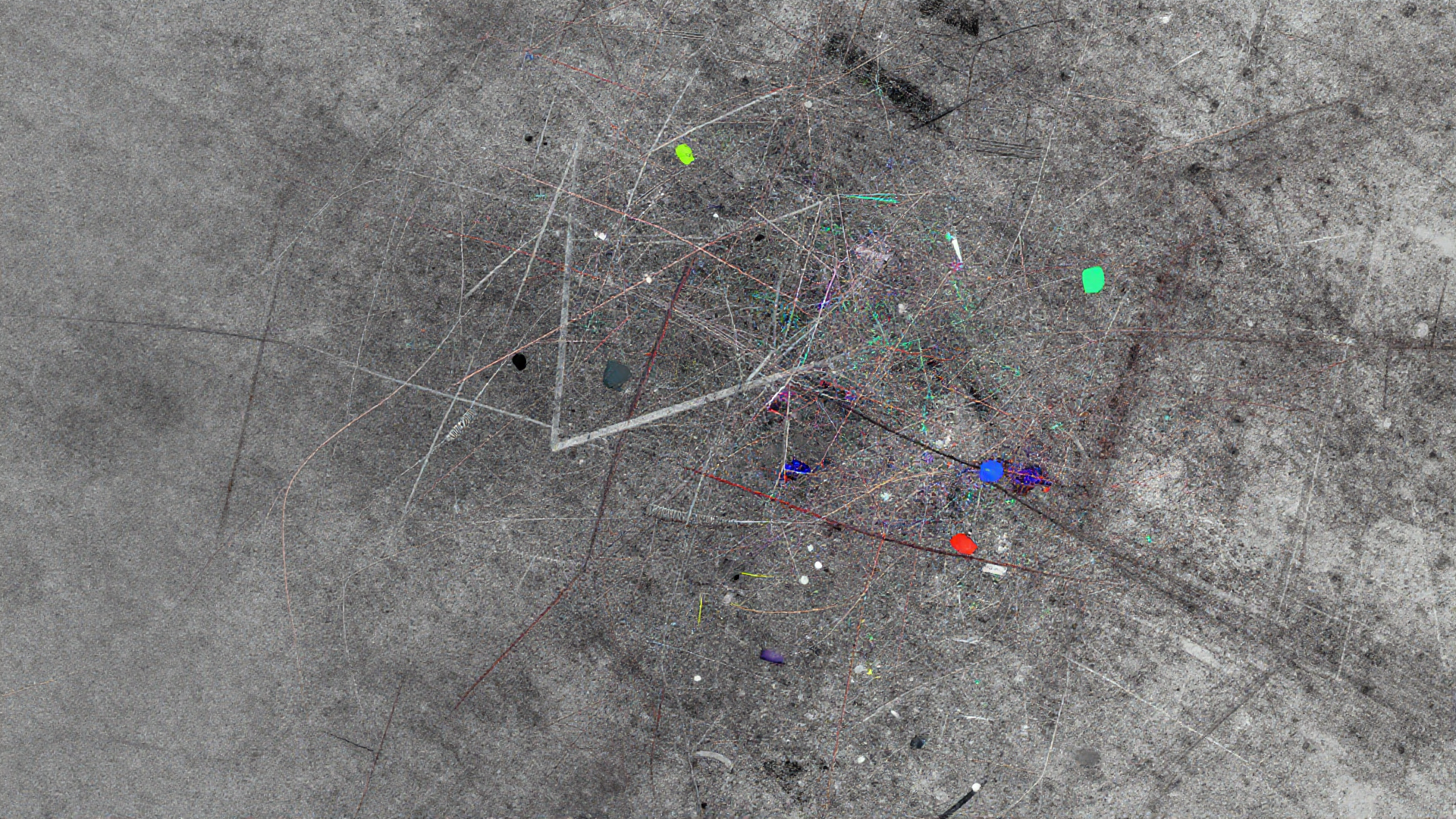

The study quantifies incoherence with a bias-variance decomposition of model errors, defining error incoherence as the fraction of total error attributable to variance. A value near zero means errors are dominated by bias: the model fails the same way every time, as a systematically misaligned optimizer would. A value near one means errors are dominated by variance: the model fails randomly and inconsistently.
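
A minimal sketch of the metric, assuming squared error on numeric (or probability) outputs and several independent samples per question; the paper's exact loss and aggregation may differ. For a question with target $y$ and sampled outputs $f$, the expected squared error splits into a systematic and a random part, and incoherence is the variance share of the total, summed over questions:

$$
\mathbb{E}[(f - y)^2] = \underbrace{(\mathbb{E}[f] - y)^2}_{\text{bias}^2} + \underbrace{\operatorname{Var}(f)}_{\text{variance}},
\qquad
\text{incoherence} = \frac{\sum \operatorname{Var}(f)}{\sum \left[(\mathbb{E}[f] - y)^2 + \operatorname{Var}(f)\right]}.
$$

The function name `incoherence` and the array layout below are illustrative assumptions, not the authors' code.

```python
import numpy as np


def incoherence(samples: np.ndarray, targets: np.ndarray) -> float:
    """Fraction of squared error due to variance (hypothetical helper).

    samples: shape (n_questions, n_samples), repeated outputs per question.
    targets: shape (n_questions,), the correct value for each question.
    Returns NaN if there is no error at all, since the ratio is undefined.
    """
    mean_pred = samples.mean(axis=1)          # E[f] per question
    bias_sq = (mean_pred - targets) ** 2      # systematic part of the error
    variance = samples.var(axis=1)            # ddof=0, so bias_sq + variance == MSE
    total = bias_sq.sum() + variance.sum()
    if total == 0:
        return float("nan")
    return float(variance.sum() / total)


# Same wrong answer every time: purely systematic error.
print(incoherence(np.array([[1.0, 1.0, 1.0, 1.0]]), np.array([0.0])))   # 0.0
# Right on average but scattered: purely random error.
print(incoherence(np.array([[-1.0, 1.0, -1.0, 1.0]]), np.array([0.0])))  # 1.0
```

Because `var` uses the population variance (`ddof=0`), squared bias plus variance equals the mean squared error exactly, which keeps the ratio in [0, 1].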

Across frontier models such as Claude Sonnet 4 and Qwen3, the results showed a clear trend: the longer models reason and the more actions they take, the more their errors are dominated by incoherence. This suggests that long, multi-step tasks amplify unpredictable behavior more than they expose latent systematic misalignment.

The relationship between model intelligence and error composition, by contrast, varied across settings. Experts subjectively judged more intelligent systems to be less coherent. On benchmarks, the effect of scale depended on difficulty: it improved coherence on easy tasks, but on the hardest tasks incoherence stayed flat or increased.

The researchers also observed that when a model spontaneously reasons for longer than its median reasoning length, error incoherence rises sharply. This natural 'overthinking' effect disrupted coherence far more than artificially raising the reasoning budget through API settings.
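
One plausible way to operationalize the 'overthinking' comparison is sketched below, under stated assumptions: squared error on numeric outputs, and a sample counts as 'long' when its reasoning length exceeds that question's median across samples. The paper's exact binning (per question or per model) may differ, and `incoherence_by_overthinking` is a hypothetical helper.

```python
import numpy as np


def _bias_var(preds: np.ndarray, target: float) -> tuple[float, float]:
    """Squared bias and population variance of one question's sampled outputs."""
    return float((preds.mean() - target) ** 2), float(preds.var())


def incoherence_by_overthinking(samples, lengths, targets):
    """Hypothetical helper: incoherence for short vs. long reasoning samples.

    samples, lengths: shape (n_questions, n_samples); lengths are reasoning
    token counts per sample. targets: shape (n_questions,).
    A sample is 'long' if its length exceeds that question's median length.
    Returns (incoherence_short, incoherence_long); NaN if a group has no error.
    """
    sums = {"short": [0.0, 0.0], "long": [0.0, 0.0]}  # [sum of bias^2, sum of variance]
    for preds, lens, target in zip(samples, lengths, targets):
        long_mask = lens > np.median(lens)
        for group, mask in (("short", ~long_mask), ("long", long_mask)):
            if mask.sum() < 2:
                continue  # variance needs at least two samples in the group
            bias_sq, var = _bias_var(preds[mask], target)
            sums[group][0] += bias_sq
            sums[group][1] += var

    def ratio(bias_sq: float, var: float) -> float:
        total = bias_sq + var
        return var / total if total > 0 else float("nan")

    return ratio(*sums["short"]), ratio(*sums["long"])


# One question, four samples: the two shortest traces agree (wrongly),
# while the two longest traces disagree with each other.
short, long_ = incoherence_by_overthinking(
    np.array([[1.0, 1.0, 0.0, 2.0]]),
    np.array([[10, 20, 30, 40]]),
    np.array([0.0]),
)
print(short, long_)  # 0.0 0.5
```

Splitting within each question, rather than across questions, is a design choice meant to separate "this model reasoned unusually long on this problem" from "this problem is simply harder".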

These results challenge an exclusive focus on systematic goal misalignment. They suggest that large transformer models, being dynamical systems, are inherently difficult to constrain into perfectly coherent optimizers, and the study posits that this difficulty does not necessarily diminish with scale.

As a potential mitigation, the authors note that ensembling multiple model samples reduces error variance and thereby improves coherence. They caution, however, that this technique may be impractical for real-world agentic applications in which actions are irreversible.
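
Why ensembling helps can be seen in a hypothetical toy model, which is an assumption and not the paper's setup: the target is 0, each sample equals a fixed bias plus independent Gaussian noise, and the ensemble averages k samples. Averaging leaves the bias unchanged but divides the variance by k, so incoherence falls. For discrete answers one would use something like majority voting instead, and the paper's exact ensembling procedure may differ.

```python
import numpy as np


def simulated_incoherence(bias: float, noise_std: float, k: int,
                          n_trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo incoherence of an ensemble that averages k samples.

    Toy model (assumption): target is 0 and each sample is bias + N(0, noise_std^2).
    """
    rng = np.random.default_rng(seed)
    samples = bias + rng.normal(0.0, noise_std, size=(n_trials, k))
    ensembled = samples.mean(axis=1)      # one averaged prediction per trial
    bias_sq = ensembled.mean() ** 2       # (E[f] - target)^2 with target = 0
    variance = ensembled.var()
    return float(variance / (bias_sq + variance))


def analytic_incoherence(bias: float, noise_std: float, k: int) -> float:
    """Closed form for the same toy model: averaging k i.i.d. samples divides variance by k."""
    variance = noise_std ** 2 / k
    return variance / (bias ** 2 + variance)


for k in (1, 5, 25):
    print(k, round(analytic_incoherence(0.5, 1.0, k), 3),
          round(simulated_incoherence(0.5, 1.0, k), 3))
```

With bias 0.5 and noise 1.0, the closed form gives 0.8 for a single sample, about 0.44 for k = 5, and about 0.14 for k = 25; the simulation should land close to these values. The bias term never shrinks, so ensembling reduces only the incoherent share of the error.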

This empirical support for the 'hot mess' theory underscores a crucial safety consideration: as systems tackle more consequential and complex problems, ensuring behavioral stability and predictability may require reducing variance as vigorously as mitigating bias.